Spotlight

AWS Lambda Functions: Return Response and Continue Executing

A how-to guide using the Node.js Lambda runtime.

When AWS Lambda announced their new Provisioned Concurrency feature yesterday, I said it was “a small compromise with Lambda’s vision, but a quantum leap for serverless adoption.”

Lambda Provisioned Concurrency is here! I had a chance to experiment with the feature before release, and while there's no beating @theburningmonk's thorough review, I do have a few thoughts and caveats. 1/ https://t.co/1DLsmUwvXu

— Forrest Brazeal (@forrestbrazeal) December 3, 2019

I’m not kidding about the quantum leap. Cold starts are consistently the #1 complaint among Lambda users, and I for one am thrilled to be having different conversations with enterprise clients who can’t necessarily wave a magic wand and refactor their legacy code for maximum serverless performance.

But I’m also serious about the compromise. Let’s be clear: Lambda provisioned concurrency is a step away from the classic Lambda model, because it’s no longer pay-for-usage.

You pay an hourly fee for each “hot spare” in the pool of provisioned concurrency underneath your function. This gets charged to you whether you are using the available compute or not.

Yan Cui, in his thorough breakdown of the ins and outs of provisioned concurrency, points out that provisioning can actually be cheaper than on-demand Lambda invocations if you are consistently using your provisioned concurrency near 100% capacity. But wait a second. The whole point of Lambda is that most workloads aren’t fully utilizing their compute capacity. Using provisioned concurrency puts us back in the game of rightsizing and capacity planning, one of the big things we want to avoid when we choose serverless in the first place.
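To make the utilization argument concrete, here is a rough back-of-the-envelope sketch. The three per-GB-second rates are placeholders I'm assuming for illustration (they approximate figures published around launch for one region), and the function names are my own; check the current pricing page before relying on any of it. Request charges are left out because they apply equally to both models.

```python
# Illustrative per-GB-second rates. These are ASSUMPTIONS for the sketch,
# not authoritative pricing; substitute the current rates for your region.
ON_DEMAND_DURATION = 0.0000166667    # regular Lambda, billed only while running
PROVISIONED_DURATION = 0.0000097222  # provisioned Lambda, billed while running
PROVISIONED_STANDBY = 0.0000041667   # provisioned Lambda, billed whether used or not


def on_demand_cost(busy_gb_seconds):
    """Cost of running the workload on regular, pay-for-usage Lambda."""
    return busy_gb_seconds * ON_DEMAND_DURATION


def provisioned_cost(total_gb_seconds, utilization):
    """Cost of keeping capacity provisioned for total_gb_seconds,
    of which the fraction `utilization` (0.0 to 1.0) is spent doing work."""
    standby = total_gb_seconds * PROVISIONED_STANDBY
    busy = total_gb_seconds * utilization * PROVISIONED_DURATION
    return standby + busy


def break_even_utilization():
    """Utilization above which provisioned capacity becomes the cheaper option."""
    return PROVISIONED_STANDBY / (ON_DEMAND_DURATION - PROVISIONED_DURATION)
```

With these placeholder rates, `break_even_utilization()` comes out at roughly 0.6. Below that, the standby fee dominates and you are paying for idle capacity, which is exactly the rightsizing exercise serverless was supposed to spare us.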

This all gets weirder when you realize that Lambda provisioned concurrency is not provisioned compute. AWS guarantees that a warm function will be available up to the limit you specify, but they do not guarantee that it is always the same execution environment. Just like all Lambda functions, provisioned environments are recycled every few hours to apply updates, etc. (This is a good thing, by the way. It gets you out of the business of having to patch for the next Spectre and Meltdown.)

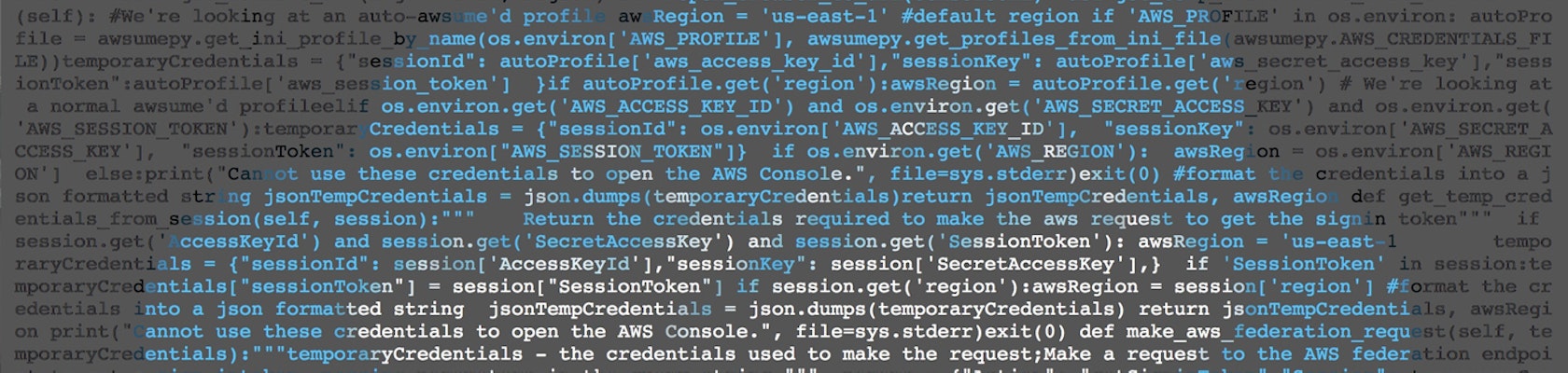

But that means that provisioned functions aren’t stateful; they can and will lose cached state. Let me show you what I mean with a small Python code example:

    import json

    # Module-level state: initialized once per execution environment
    count = 0

    def lambda_handler(event, context):
        global count
        count += 1
        return {
            'statusCode': 200,
            'body': json.dumps({"count": count})
        }

When you run this code in Lambda the first time, cold start or provisioned, count will be 1. If you invoke the function several more times over the next few minutes, you’ll see a higher number returned each time as the counter increments. This is possible because all Lambda functions preserve a warm environment for some period of time after they start, meaning that global state can be reused, a feature commonly used for serverless caching strategies.

But at some point, even if the function is warm, and even if the concurrency is provisioned, the Lambda service will recycle this execution environment. In the provisioned concurrency world, the function will then be re-initialized in a new environment without you having to call it directly, running the global code outside your handler and resetting count to 0.

Interestingly, this re-warming event is visible in AWS X-Ray. If you ever wanted to see a Lambda function with a “running time” of an hour, here is your chance!

Note the “Initialization” time takes place over an hour before the actual function invocation.

So if I’m not paying for state with my per-hour billing charges, what exactly is Lambda provisioned concurrency giving me for my money? In a nutshell, it’s a managed version of the pre-warmers that Lambda users have been hacking together for years. The warm environment in between those occasional warming events is something that regular Lambda already gives me for free. But provisioned functions don’t even have a free tier.
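For anyone who hasn't seen one, a homegrown pre-warmer usually looks something like the sketch below: a scheduled rule invokes the function with a marker payload, and the handler returns early when it sees it. The `warmer` key and the payload shape are conventions I'm assuming for illustration, not anything Lambda defines.

```python
import json

# Hypothetical marker that a scheduled warming rule would put in its payload.
WARMER_KEY = "warmer"


def is_warmup(event):
    """True when the invocation is a keep-warm ping rather than real traffic."""
    return isinstance(event, dict) and event.get(WARMER_KEY) is True


def lambda_handler(event, context):
    if is_warmup(event):
        # Skip the real work; the invocation alone keeps the environment warm.
        return {"statusCode": 200, "body": json.dumps({"warmed": True})}

    # Real request handling would go here.
    return {"statusCode": 200, "body": json.dumps({"message": "real work done"})}
```

Each ping is billed as an ordinary invocation, which is the usage-based model I'd like to keep.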

With that said, I really wish AWS would revise the pricing model of provisioned concurrency to only charge for the warming events themselves. If I have provisioned concurrency of 50, and AWS has to run 50 scheduled initializations every two hours, then I should be charged only for those init events, not an opaque hourly fee. I can already see these events in X-Ray, so billing for them should be easy, right?

Make the “prewarmer” events 15% more expensive than a regular Lambda invocation if need be, to cover the overhead of scheduling or whatever other magic is happening. But don’t take away my scale-to-zero billing model. That’s what I came to Lambda for in the first place.

In the meantime, when does the compromise of provisioned concurrency make sense? When the impact of a cold start on your business is more expensive than either of these two things:

Since there are plenty of use cases where cold starts are hampering adoption under those criteria, I’m really glad provisioned concurrency is an option. But I’d be even happier if it adhered to the usage-based pricing that made Lambda revolutionary in the first place.

Trek10’s Adam Baker contributed research to this post.
