
Trek10 was one of seven launch partners for the AWS IoT Competency back in 2016, and we have worked on dozens of IoT deployments with clients across several verticals, including deployments that use AWS IoT Basic Ingest. This has given us a deep understanding of the struggles involved in optimizing services for IoT device connectivity and data ingestion, and it has led us to develop IoT design patterns that we use to tackle these issues with our clients.

In this post, I will describe three of the design patterns that we’ve used for processing incoming data from IoT devices, and I will discuss their tradeoffs in terms of cost, data retention, propagation delay, and unique features.

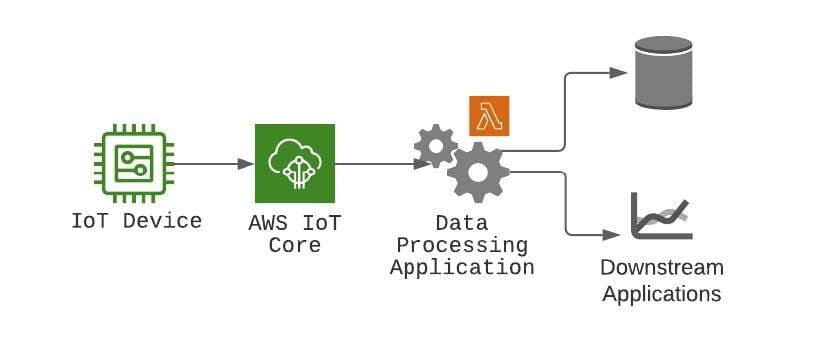

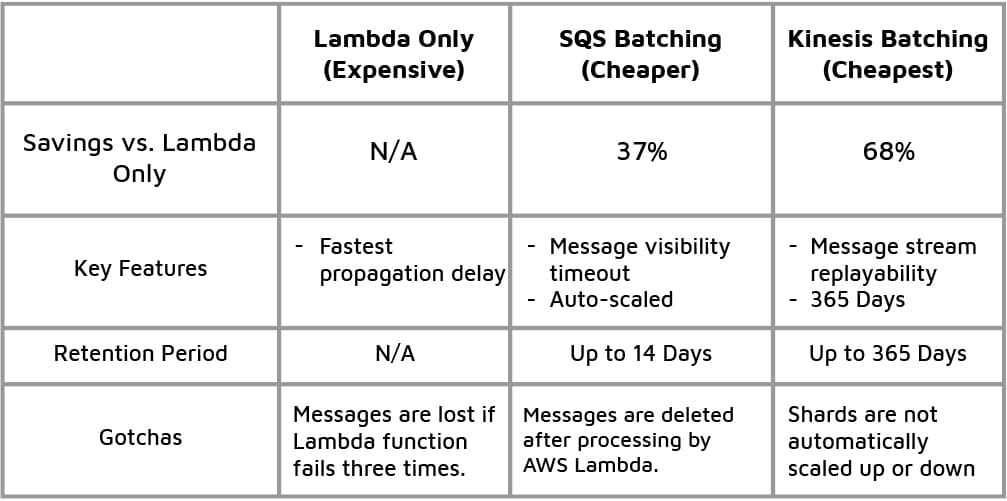

First up, let’s take a look at what is probably the most common design pattern that we see: IoT devices publish messages into AWS IoT Core, and a rule sends the data directly to an AWS Lambda function for processing, such as data validation.

Although the data processing application sometimes runs as a containerized app or on a cloud server, AWS Lambda is a more typical choice because of its pay-per-use model and its autoscaling capabilities, which remove the need to deploy and maintain load balancers.
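As a minimal sketch of the validation step in this pattern, the handler below assumes the IoT rule passes the device message to Lambda as the event. The schema (`device_id`, `temperature`, `ts`) and the shape of the return value are hypothetical and only illustrate the idea; they are not taken from any particular deployment.

```python
from typing import Any

# Hypothetical schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {
    "device_id": str,
    "temperature": (int, float),
    "ts": (int, float),
}


def validate(message: dict[str, Any]) -> list[str]:
    """Return a list of validation problems; an empty list means valid."""
    problems = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in message:
            problems.append(f"missing field: {field}")
        # bool is a subclass of int, so reject it explicitly.
        elif isinstance(message[field], bool) or not isinstance(message[field], expected):
            problems.append(f"wrong type for field: {field}")
    return problems


def handler(event: dict[str, Any], context: Any) -> dict[str, Any]:
    """Lambda entry point: validate one device message."""
    problems = validate(event)
    if problems:
        # Malformed data will not improve on retry, so report it
        # instead of raising and triggering Lambda's retry behaviour.
        return {"valid": False, "problems": problems}
    return {"valid": True, "device_id": event["device_id"]}
```

Returning a result for malformed messages rather than raising is a design choice here: retries are better reserved for transient failures, such as a downstream service being unavailable.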

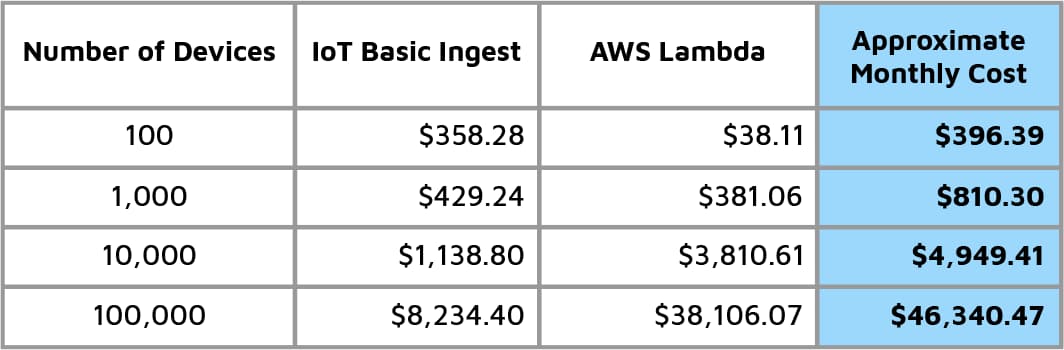

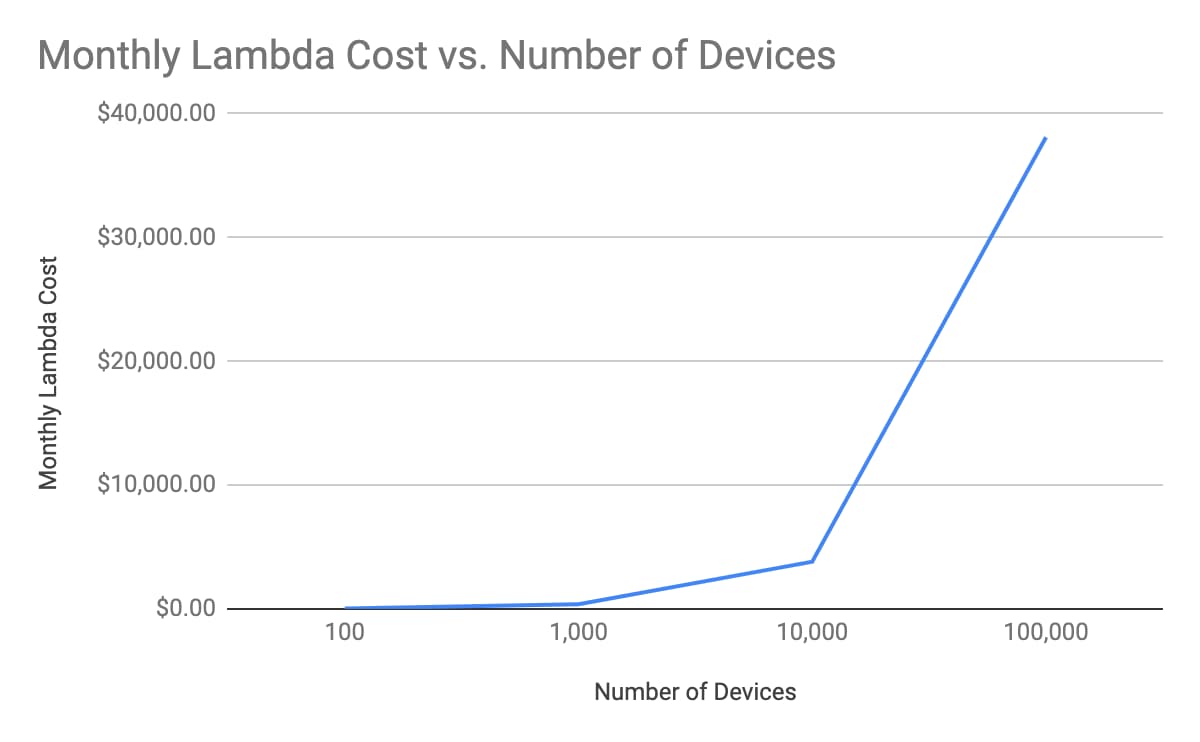

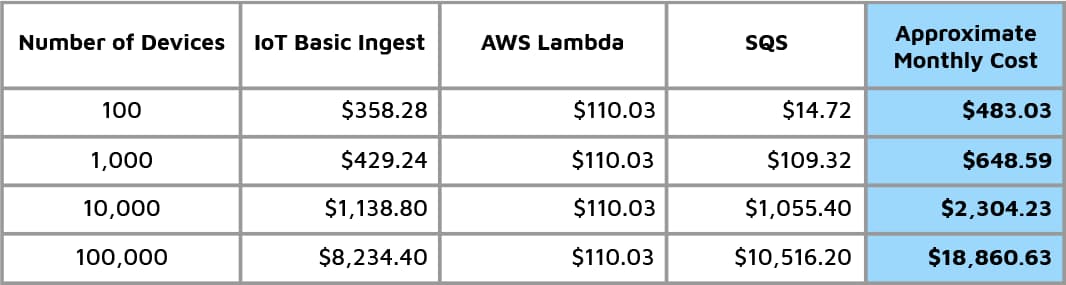

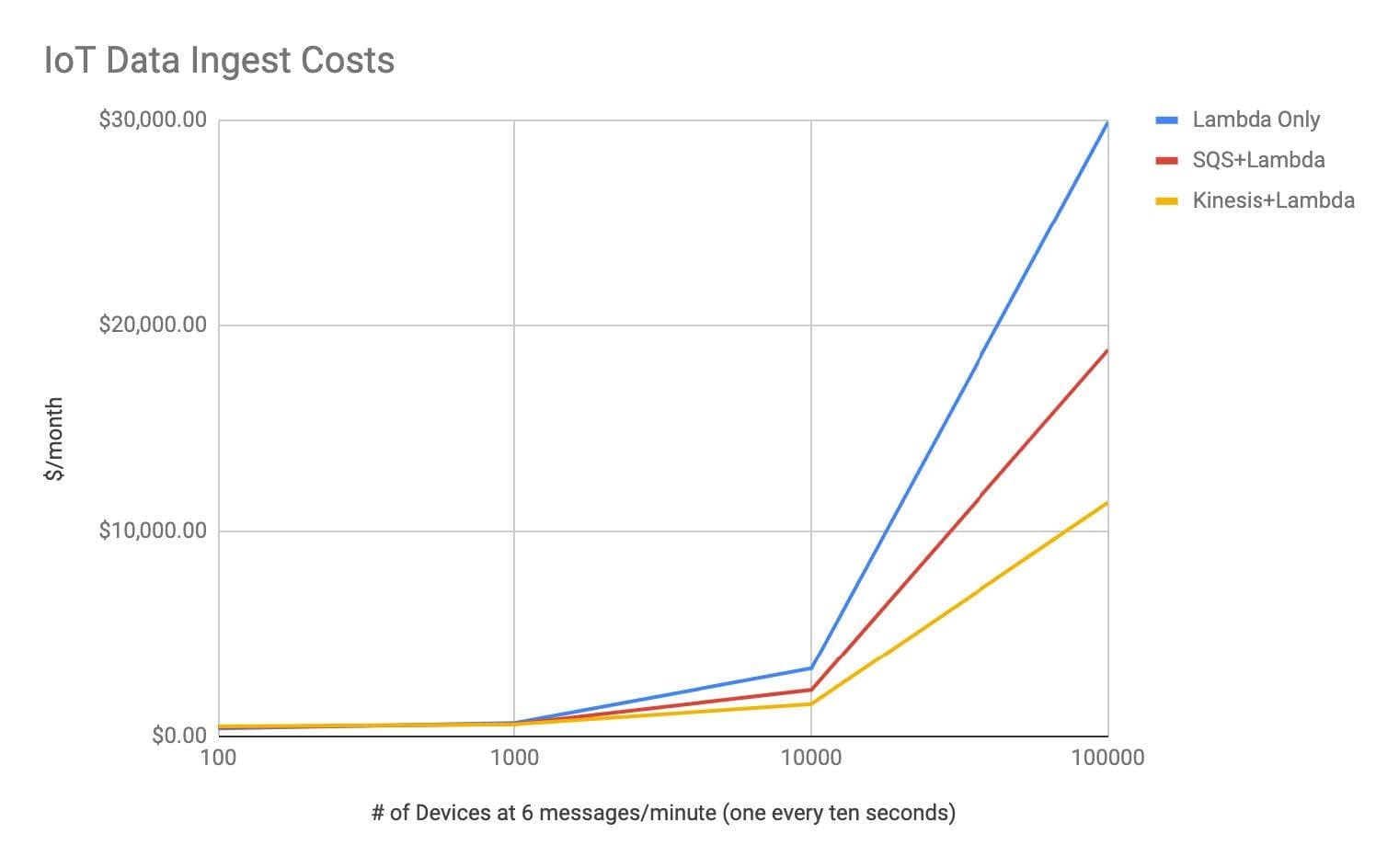

This straightforward design pattern is best suited for systems that need the lowest propagation delay for incoming data: information is processed immediately upon arrival and made available to downstream services. That said, the rule invokes Lambda asynchronously, so a message that still fails after Lambda's retry attempts (by default three attempts in total: the initial invocation plus two retries) is lost unless the function is configured with a destination or dead-letter queue. The cost of Lambda also rises quickly as data volume grows:

Table 1 - Costs of AWS IoT Basic Ingest + AWS Lambda with 256 MB of memory and 300 ms average execution time, graphed in the following chart.
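To see why the Lambda bill grows with volume, here is a rough sketch of the arithmetic. The two unit prices are assumptions based on published on-demand Lambda pricing for x86 in a typical US region at the time of writing, so check the current pricing page before relying on them. The sketch ignores the free tier and any AWS IoT Core charges, and the message rate passed to `monthly_messages` is a hypothetical input, not a figure from the tables.

```python
# Assumed unit prices (USD); verify against current AWS Lambda pricing.
LAMBDA_PRICE_PER_REQUEST = 0.20 / 1_000_000
LAMBDA_PRICE_PER_GB_SECOND = 0.0000166667


def monthly_messages(devices: int, messages_per_device_per_minute: float, days: int = 30) -> float:
    """Total messages sent by a fleet over a month of the given length."""
    return devices * messages_per_device_per_minute * 60 * 24 * days


def lambda_monthly_cost(invocations: float, memory_mb: int = 256, avg_duration_ms: float = 300) -> float:
    """Request charge plus compute charge for one invocation per message."""
    gb_seconds = invocations * (memory_mb / 1024) * (avg_duration_ms / 1000)
    return invocations * LAMBDA_PRICE_PER_REQUEST + gb_seconds * LAMBDA_PRICE_PER_GB_SECOND


if __name__ == "__main__":
    # Hypothetical fleet: 10,000 devices, one message per device per minute.
    messages = monthly_messages(10_000, 1)
    print(f"{messages:,.0f} messages -> ${lambda_monthly_cost(messages):,.2f} per month")
```

Both terms scale linearly with the number of invocations, which is why reducing the invocation count through batching is the lever the next two patterns pull.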

So what can we do about these swelling costs? There are two methods we can use to temper them: SQS Batching and Kinesis Batching.


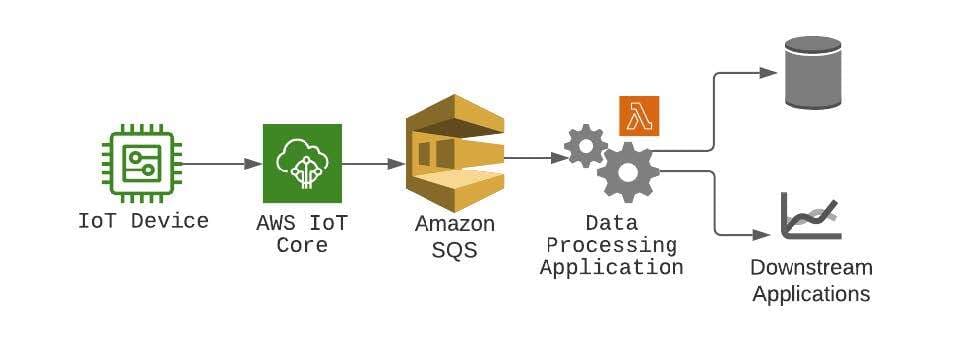

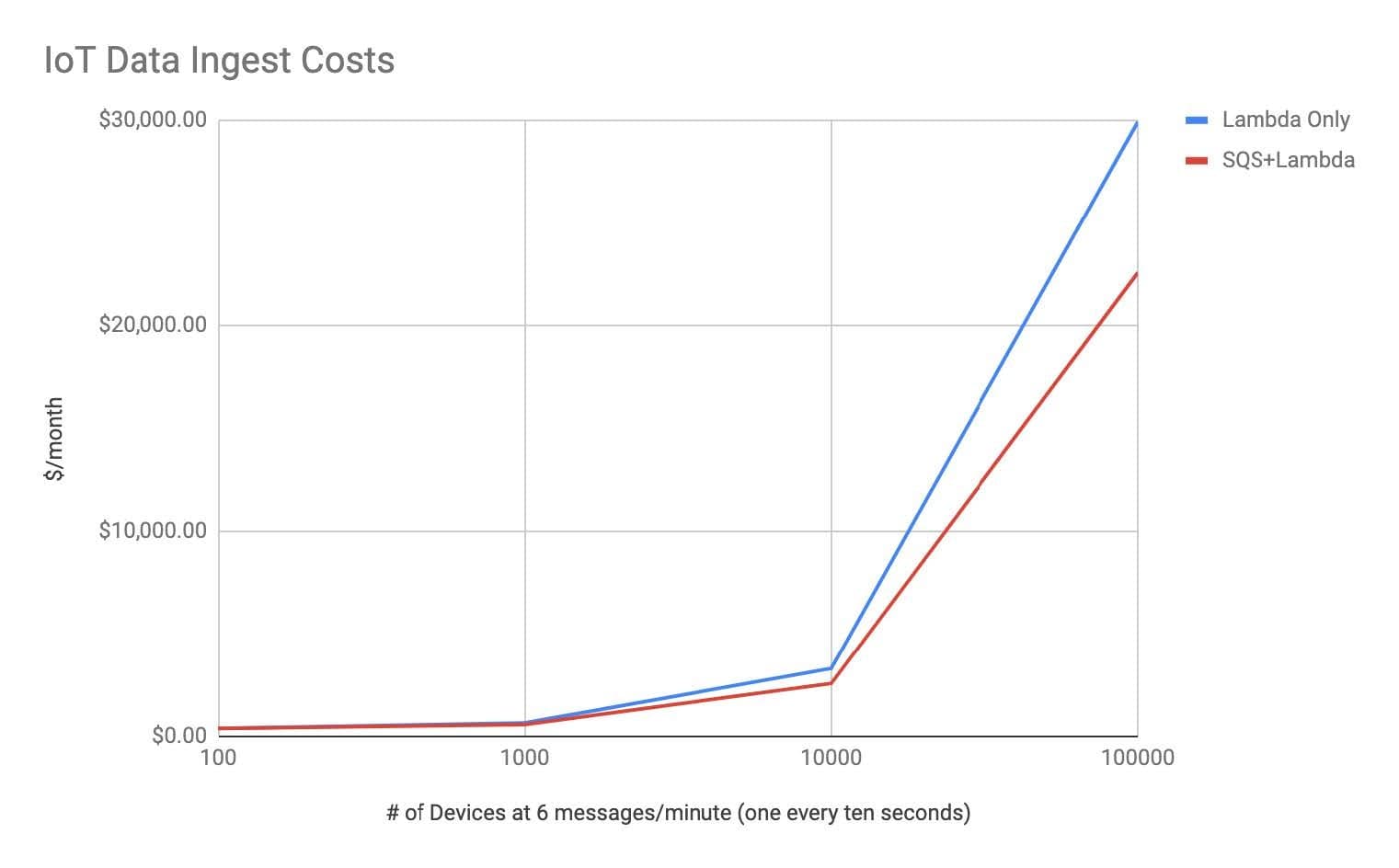

The second pattern consists of inserting an SQS queue between AWS IoT Core and AWS Lambda. Batching device messages in this way allows the Lambda function to process multiple messages per invocation, which results in up to a 37% cost reduction at higher volumes. Furthermore, SQS provides some useful messaging capabilities such as message locking, auto-scaling, and up to 14 days of message retention.

Naturally, this introduces some propagation delay. The delay is governed by the batching window, an event source mapping property (`MaximumBatchingWindowInSeconds`) that specifies how long AWS Lambda gathers SQS records before invoking the function; it can be set between 0 and 300 seconds. You can expect propagation delay to increase by at least a fraction of a second given the nature of message batching, and whether that is tolerable depends on your requirements.
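A batch-aware handler for this pattern might look like the sketch below. It assumes the IoT rule writes each device message to the queue as a JSON body, and that partial batch responses (`ReportBatchItemFailures`) are enabled on the event source mapping so that only failed messages return to the queue. The `process_sqs_message` helper and its `device_id` check are hypothetical stand-ins for real processing.

```python
import json
from typing import Any


def process_sqs_message(message: dict[str, Any]) -> None:
    """Hypothetical processing step; raises if the message is unusable."""
    if "device_id" not in message:
        raise ValueError("missing device_id")


def sqs_handler(event: dict[str, Any], context: Any) -> dict[str, Any]:
    """Process a batch of SQS records and report only the failed ones."""
    failures = []
    for record in event["Records"]:
        try:
            process_sqs_message(json.loads(record["body"]))
        except Exception:
            # Only this message is retried; the rest of the batch is
            # deleted from the queue as successfully processed.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}
```

Without partial batch responses, a single bad message causes the whole batch to be retried, which erodes some of the savings that batching provides.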

The costs for SQS Batching are outlined and compared with the Lambda-only pattern below:

Table 2 - Monthly costs of using the SQS Batching pattern, contrasted with using a Lambda function alone in the following chart.

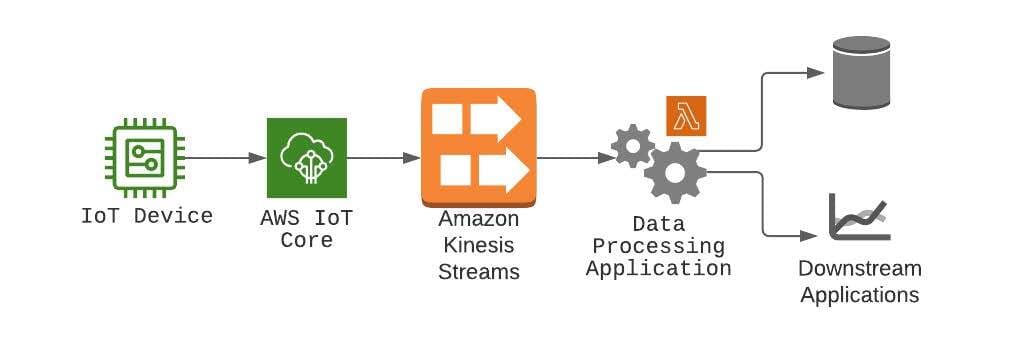

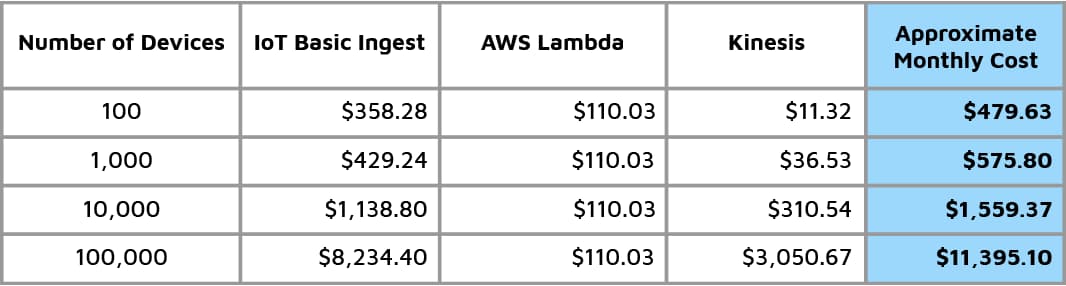

The last option we will explore for managing IoT data ingestion costs is Kinesis Data Streams. By placing a data stream between AWS IoT Core and AWS Lambda, the data can be batched much as in the SQS Batching pattern. This results in up to a 68% cost reduction versus using AWS Lambda alone, or up to 40% versus the SQS Batching pattern. Kinesis provides a stream retention period of up to 365 days and also lets you replay a stream of messages, in order, multiple times. By contrast, to get ordered messages in SQS you’ll have to pay for FIFO queues, and replay is not natively supported.

The issue of propagation delay is similar to what you get when using SQS, and you can also configure the amount of time that Lambda should wait to batch messages (known as the batch window).
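The handler for the Kinesis pattern differs mainly in how records arrive: the payload is base64-encoded under `kinesis.data`, and records are identified by sequence number. The sketch below assumes JSON payloads and that partial batch responses (`ReportBatchItemFailures`) are enabled on the event source mapping; `process_stream_message` is a hypothetical placeholder for real processing.

```python
import base64
import json
from typing import Any


def process_stream_message(message: dict[str, Any]) -> None:
    """Hypothetical processing step; raises if the message is unusable."""
    if "device_id" not in message:
        raise ValueError("missing device_id")


def kinesis_handler(event: dict[str, Any], context: Any) -> dict[str, Any]:
    """Process stream records in order, stopping at the first failure."""
    for record in event["Records"]:
        sequence_number = record["kinesis"]["sequenceNumber"]
        try:
            payload = json.loads(base64.b64decode(record["kinesis"]["data"]))
            process_stream_message(payload)
        except Exception:
            # Lambda retries from this sequence number onward, so
            # ordering within the shard is preserved.
            return {"batchItemFailures": [{"itemIdentifier": sequence_number}]}
    return {"batchItemFailures": []}
```

Stopping at the first failure, rather than collecting every failure as with SQS, reflects the ordered nature of a stream: records after the failed one are reprocessed anyway.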

Below we list the cost of Kinesis Batching and compare it with the two previous design patterns:

Table 3 - Costs of using the Kinesis Batching pattern, contrasted with using a Lambda function alone and with SQS Batching in the following chart.

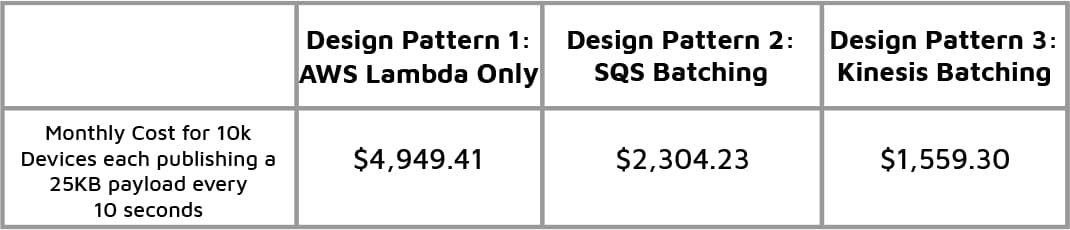

We have explained three design patterns used to ingest data from AWS IoT Core and compared their cost, data retention, propagation delay, and key features. There is much we haven’t covered, such as an in-depth look at on-failure destinations for Lambda or maximum payload sizes, but this survey should give you a decent idea of how our three main IoT message processing patterns compare to each other. We summarize their costs and characteristics side by side in the two tables below.

Table 4 - Design Pattern Costs for 10,000 Devices

Table 5 - Design Patterns’ Savings, Features, and Retention Period
