Spotlight

How to Use IPv6 With AWS Services That Don't Support It

Build an IPv6-to-IPv4 proxy using CloudFront to enable connectivity with IPv4-only AWS services.

FinDev and the flow of capital through an application are hot topics these days: Yan Cui and Aleksandar Simovic have both written about them recently. The pay-by-request model of most serverless services makes it possible to build detailed models of how much each additional user or transaction costs an application. In Lyft’s recent S-1 filing, the company disclosed a commitment to spend at least $300 million on AWS between 2019 and 2021, with a minimum of $80 million in each year:

> The table does not reflect the January 2019 addendum to the AWS arrangement under which the parties modified the aggregate commitment amounts and timing. Under the amended arrangement, we committed to spend an aggregate of at least $300 million between January 2019 and December 2021, with a minimum amount of $80 million in each of the three years, on AWS services.

$80 million is a huge amount of money, but broken down per ride, it comes out to about $0.14. As part of the cost of goods sold (COGS), that puts infrastructure costs roughly on par with what a payment processor takes on a credit card transaction. This framing is important for understanding your cloud bill in terms of cost per user.

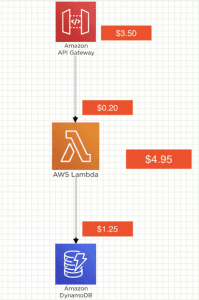

In his article, Aleksandar uses this chart to illustrate the cost of each application component, and that is what piqued my interest in DynamoDB pricing: the little $1.25/million box.

Since its inception, DynamoDB has added three additional pricing models on top of the original provisioned-capacity model. For anyone whose life doesn’t involve obsessively reading AWS announcements, let’s review your DynamoDB pricing options.

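On-Demand is the simplest pricing model around: you pay for storage and requests, and that’s all. There is no capacity planning or prediction. You pay $1.25 per million writes and $0.25 per million reads (each write request unit covers a write of up to 1 KB, and each read request unit a strongly consistent read of up to 4 KB). This is where “simple” ends in this post.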
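A minimal sketch of the On-Demand request bill, using the per-million prices quoted above. The constant names and the `on_demand_request_cost` helper are hypothetical; storage is billed separately and is deliberately left out, and real prices vary by region and change over time.

```python
# Prices quoted in this post; actual On-Demand prices vary by region and over time.
ON_DEMAND_PER_MILLION_READS = 0.25
ON_DEMAND_PER_MILLION_WRITES = 1.25


def on_demand_request_cost(reads: int, writes: int) -> float:
    """Dollar cost of On-Demand requests only (storage is not modeled)."""
    return (
        reads * ON_DEMAND_PER_MILLION_READS + writes * ON_DEMAND_PER_MILLION_WRITES
    ) / 1_000_000


# Example: 10 million reads and 2 million writes in a month.
print(on_demand_request_cost(10_000_000, 2_000_000))  # 5.0
```

Even with five times fewer writes than reads, writes account for half of this bill, because each write costs five times as much as a read.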

The first, and still most common, pricing method for DynamoDB is pay-per-capacity. You pay to provision a certain throughput for your DynamoDB table, say 100 Read Capacity Units (RCUs), which gives you 100 strongly consistent reads per second of items up to 4 KB. This is close to pay-per-use but not quite: you pay for the capacity whether or not it is used, so the costs break down differently.
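To make the capacity-unit definitions concrete, here is a small sketch that estimates how many units a steady workload needs. It relies on DynamoDB’s unit sizes (one RCU per strongly consistent read of up to 4 KB, with eventually consistent reads costing half; one WCU per write of up to 1 KB). The function names are hypothetical, and the sketch ignores transactional reads and writes, which consume more units.

```python
import math

READ_UNIT_BYTES = 4 * 1024   # one RCU: a strongly consistent read of up to 4 KB per second
WRITE_UNIT_BYTES = 1 * 1024  # one WCU: a write of up to 1 KB per second


def rcus_needed(reads_per_second: int, item_bytes: int, strongly_consistent: bool = True) -> int:
    """Estimate provisioned RCUs for a steady read rate of fixed-size items."""
    # Each read rounds up to whole 4 KB units, with a minimum of one unit.
    units_per_read = max(1, math.ceil(item_bytes / READ_UNIT_BYTES))
    total = reads_per_second * units_per_read
    # Eventually consistent reads cost half as much; round up to whole units.
    return total if strongly_consistent else math.ceil(total / 2)


def wcus_needed(writes_per_second: int, item_bytes: int) -> int:
    """Estimate provisioned WCUs for a steady write rate of fixed-size items."""
    return writes_per_second * max(1, math.ceil(item_bytes / WRITE_UNIT_BYTES))


print(rcus_needed(100, 4 * 1024))                             # 100
print(rcus_needed(100, 6 * 1024))                             # 200
print(rcus_needed(100, 6 * 1024, strongly_consistent=False))  # 100
print(wcus_needed(10, 2_500))                                 # 30
```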

Additionally, DynamoDB gives you an incentive to over-provision to avoid being throttled. If you consume more than the provisioned 100 RCUs in a second, DynamoDB can reject further requests, which (to an end user) makes the data appear unavailable. Depending on your app, this may be acceptable, or it can be worked around with caches, retries, or other techniques.
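Retries are the most common workaround. Below is a minimal sketch of retrying a throttled call with exponential backoff and "full jitter". `ThrottledError` and `with_backoff` are hypothetical names standing in for whatever your client raises (DynamoDB reports throttling as `ProvisionedThroughputExceededException`). The AWS SDKs already retry throttled requests by default, so treat this as an illustration of the technique rather than something you must write yourself.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class ThrottledError(Exception):
    """Hypothetical stand-in for a throttling error such as ProvisionedThroughputExceededException."""


def with_backoff(call: Callable[[], T], max_attempts: int = 5, base_delay: float = 0.05,
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry `call` on ThrottledError, waiting longer (with random jitter) after each failure."""
    for attempt in range(max_attempts):
        try:
            return call()
        except ThrottledError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the throttling error to the caller
            # Full jitter: sleep a random time between 0 and base_delay * 2**attempt seconds.
            sleep(random.uniform(0, base_delay * 2 ** attempt))
    raise ValueError("max_attempts must be at least 1")


# Usage with a fake call that is throttled twice before succeeding.
attempts = []


def flaky_read() -> dict:
    attempts.append(1)
    if len(attempts) < 3:
        raise ThrottledError()
    return {"id": "42"}


print(with_backoff(flaky_read, sleep=lambda seconds: None))  # {'id': '42'}
```

The `sleep` parameter exists only so the usage example (and tests) can skip real waiting; in production you would keep the default.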

To determine the cost per million requests, we can work out how many requests a single RCU-hour buys at $0.00013. With 3,600 seconds in an hour, one fully used RCU-hour serves 3,600 reads, so each read costs $0.00013 / 3600 ≈ $0.0000000361, or $0.0361 per million. This isn’t looking good for the $0.25/million we pay for DynamoDB On-Demand.
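The same arithmetic as a tiny helper, assuming the capacity unit is fully used every second of the hour. The function name is hypothetical; the second call uses the provisioned WCU rate of $0.00065 per hour from this post’s WCU table.

```python
SECONDS_PER_HOUR = 3600


def cost_per_million_requests(hourly_unit_cost: float) -> float:
    """Cost of one million requests on a capacity unit that is 100% utilized."""
    cost_per_request = hourly_unit_cost / SECONDS_PER_HOUR  # one request per second
    return cost_per_request * 1_000_000


print(round(cost_per_million_requests(0.00013), 4))  # 0.0361 (provisioned RCU)
print(round(cost_per_million_requests(0.00065), 4))  # 0.1806 (provisioned WCU)
```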

Much like Reserved Instances in EC2, reserved capacity in DynamoDB gives you a discounted price in exchange for committing to a certain amount of usage up front. It is priced in the same units as provisioned capacity, but you buy one- or three-year reservations in blocks of 100 RCUs or WCUs.

Compared to standard provisioned capacity, you save about 54% with a one-year reservation or about 77% with a three-year reservation. Reservations are purely a billing construct: you use provisioned capacity as normal and the savings are applied automatically. For reads, that works out to $0.0166 per million with a one-year reservation and $0.0083 per million with a three-year reservation.
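A sketch that reproduces the reservation figures from the effective hourly RCU rates in the RCU comparison table later in this post. It assumes those hourly figures are all-in rates; real reservations also involve an upfront payment, which this sketch does not model. The helper name is hypothetical.

```python
PROVISIONED_RCU_HOURLY = 0.00013
# Effective hourly RCU rates from this post's comparison table (assumed all-in rates).
RESERVED_RCU_HOURLY = {"1-year": 0.0000597, "3-year": 0.0000299}


def reservation_summary(hourly: float) -> tuple[float, float]:
    """Return (fractional savings vs. provisioned, cost per million reads at 100% use)."""
    savings = 1 - hourly / PROVISIONED_RCU_HOURLY
    per_million = hourly / 3600 * 1_000_000
    return savings, per_million


for term, hourly in RESERVED_RCU_HOURLY.items():
    savings, per_million = reservation_summary(hourly)
    print(f"{term}: {savings:.1%} saved, ${per_million:.4f} per million reads")
```

Because the savings are a ratio of hourly rates, they stay the same at any utilization level, which is why the reserved rows show identical savings in both utilization columns of the tables.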

Because real-world applications rarely have flat usage, saving money requires scaling DynamoDB provisioned capacity up and down with your usage. DynamoDB Autoscaling responds automatically to CloudWatch metrics, increasing and decreasing capacity as your demand changes.
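DynamoDB Autoscaling is built on Application Auto Scaling target tracking: you choose a target utilization and the service adjusts provisioned capacity toward it. The sketch below is a deliberately simplified model of that rule, not AWS’s actual algorithm (which works from CloudWatch alarms evaluated over time and applies its own scale-in and scale-out behavior). The function name, the 70% target, and the min/max bounds are illustrative assumptions.

```python
def target_capacity(consumed_units: int, target_percent: int = 70,
                    min_units: int = 5, max_units: int = 1000) -> int:
    """Capacity that would put consumption at the target utilization, within bounds."""
    # Integer ceiling division: smallest capacity with consumed / capacity <= target.
    desired = -(-consumed_units * 100 // target_percent)
    return max(min_units, min(max_units, desired))


for consumed in (2, 35, 140, 900):
    print(consumed, "->", target_capacity(consumed))
# 2 -> 5, 35 -> 50, 140 -> 200, 900 -> 1000
```

Integer arithmetic is used so that values like 35 / 0.7 do not pick up floating-point error before rounding up.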

Nothing in life is free, and you’ll be paying for DynamoDB Autoscaling in two ways:

Autoscaling costs don’t scale with the number of transactions. For a table provisioned for 10 RCUs and 10 WCUs with two GSIs, you pay $1.60 for CloudWatch alarms on $5.81 of DynamoDB capacity.

At the low end of usage, Autoscaling wipes out much of the savings that provisioned capacity offers over On-Demand pay-per-use. Once your DynamoDB spend is on the order of hundreds of dollars, the alarms are an insignificant percentage of the bill compared with the potential savings from paying for less over-provisioned capacity.
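To see how the fixed alarm cost fades as spend grows, here is a quick sketch using the figures above ($1.60 of alarms on $5.81 of capacity). It assumes, as the text says, that the alarm cost stays flat as capacity spend rises; the helper name and the larger spend levels are hypothetical.

```python
ALARM_COST = 1.60  # CloudWatch alarm cost from the example above; assumed flat


def alarm_overhead(capacity_cost: float, alarm_cost: float = ALARM_COST) -> float:
    """Alarm cost as a fraction of the DynamoDB capacity it manages."""
    return alarm_cost / capacity_cost


for capacity_cost in (5.81, 50.0, 500.0):
    print(f"${capacity_cost:,.2f} of capacity -> {alarm_overhead(capacity_cost):.1%} overhead")
# roughly 27.5%, 3.2%, and 0.3% overhead
```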

Paying for idle capacity is the bane of any capital-flow style pricing. Autoscaling helps keep your provisioned capacity (the amount you pay for) close to your actual utilization.

To compare the DynamoDB pricing models, we break them down into a common unit: the cost per million Read (and Write) Capacity Units. Because On-Demand is pay-per-use while the other models charge for capacity whether or not it is used, we have to derive their cost per request at different utilization levels.

| Capacity type | RCU hourly cost | Cost/million reads (100% util.) | Cost/million reads (50% util.) | Savings vs. provisioned (50% util.) | Savings vs. provisioned (20% util.) |
|---|---|---|---|---|---|
| On-Demand | $0.0001800 | $0.2500 | $0.2500 | -246.15% | -38.46% |
| Provisioned | $0.0001300 | $0.0361 | $0.0722 | 0.00% | 0.00% |
| Reserved 1 year | $0.0000597 | $0.0166 | $0.0332 | 54.06% | 54.06% |
| Reserved 3 year | $0.0000299 | $0.0083 | $0.0166 | 77.01% | 77.01% |

Looking at the RCU table, it’s obvious that you pay a premium for On-Demand. At 100% utilization, a million On-Demand reads cost nearly seven times as much as the same reads on provisioned capacity ($0.25 vs. $0.0361).

In real-world usage, 100% utilization is a pipe dream, so we need to run the same calculations at more realistic levels. If usage is spiky enough that you use only 50% of the provisioned capacity, the savings from provisioned capacity look less extreme. On-Demand still costs about 246% more (roughly 3.5 times as much), but the capacity planning burden shifts to AWS and you’re free to focus on other work.
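The utilization columns follow from dividing the 100%-utilization cost by the utilization factor (you pay for the whole capacity unit but use only part of it), then comparing each option against provisioned capacity at the same utilization. A minimal sketch that reproduces the On-Demand row of the RCU table; the helper names are hypothetical.

```python
SECONDS_PER_HOUR = 3600
PROVISIONED_RCU_HOURLY = 0.00013
ON_DEMAND_PER_MILLION_READS = 0.25


def provisioned_cost_per_million(hourly_unit_cost: float, utilization: float) -> float:
    """Effective cost per million requests when only `utilization` of capacity is used."""
    requests_per_unit_hour = SECONDS_PER_HOUR * utilization
    return hourly_unit_cost / requests_per_unit_hour * 1_000_000


def savings_vs_provisioned(option_cost: float, provisioned_cost: float) -> float:
    """Positive means cheaper than provisioned; negative means more expensive."""
    return 1 - option_cost / provisioned_cost


for utilization in (0.5, 0.2):
    provisioned = provisioned_cost_per_million(PROVISIONED_RCU_HOURLY, utilization)
    on_demand = savings_vs_provisioned(ON_DEMAND_PER_MILLION_READS, provisioned)
    print(f"{utilization:.0%} util: provisioned ${provisioned:.4f}/M, On-Demand savings {on_demand:.2%}")
```

The ratio between On-Demand and provisioned prices is the same for reads and writes, which is why the On-Demand rows of both tables show identical percentages.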

| Capacity type | WCU hourly cost | Cost/million writes (100% util.) | Cost/million writes (50% util.) | Savings vs. provisioned (50% util.) | Savings vs. provisioned (20% util.) |
|---|---|---|---|---|---|
| On-Demand | $0.00090 | $1.2500 | $1.2500 | -246.15% | -38.46% |
| Provisioned | $0.00065 | $0.1806 | $0.3611 | 0.00% | 0.00% |
| Reserved 1 year | $0.00030 | $0.0838 | $0.1676 | 53.60% | 53.60% |
| Reserved 3 year | $0.00015 | $0.0418 | $0.0836 | 76.85% | 76.85% |

The story is much the same for WCUs: the savings differ from the RCU savings by less than a percentage point in most cases. For an extremely unpredictable or badly planned DynamoDB architecture running at 20% utilization, On-Demand capacity is only about 38% more expensive than provisioned capacity.
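One number the tables imply but don’t state is the break-even point. Provisioned capacity at utilization u costs (its 100%-utilization cost) / u per million requests, so it matches On-Demand when u equals the ratio of the two prices. A sketch of that calculation with the prices used in this post; this is derived arithmetic, not a figure published by AWS, and the function name is hypothetical.

```python
SECONDS_PER_HOUR = 3600


def break_even_utilization(hourly_unit_cost: float, on_demand_per_million: float) -> float:
    """Average utilization below which On-Demand is cheaper than provisioned capacity."""
    full_use_per_million = hourly_unit_cost / SECONDS_PER_HOUR * 1_000_000
    return full_use_per_million / on_demand_per_million


print(f"reads:  {break_even_utilization(0.00013, 0.25):.1%}")  # 14.4%
print(f"writes: {break_even_utilization(0.00065, 1.25):.1%}")  # 14.4%
```

With these prices, On-Demand only undercuts standard provisioned capacity when average utilization falls below roughly 14%, which is consistent with it still costing 38% more at 20% utilization.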

Development environments are the clearest beneficiaries of DynamoDB On-Demand pricing. When you only use an environment for testing, it’s great to pay only for storage while no tests are running. Cheaper test environments mean you can create more of them and give each developer their own isolated environment without runaway costs.

Critically important environments are another beneficiary. With On-Demand pricing, you eliminate throttling caused by exceeding provisioned capacity. To get the same protection without On-Demand, you may have to provision double your base load on every table and GSI so that spikes don’t outstrip the burst capacity of your tables or indexes. Any GSI that runs out of write capacity can cause writes to its base table to be throttled.
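The throttling risk can be sketched as a headroom check across a table and its GSIs. This is an illustrative model only: it ignores burst and adaptive capacity, and the resource names, loads, and 2x headroom factor are hypothetical. Because a GSI that runs out of write capacity can throttle writes to its base table, any throttled index counts against the table as well.

```python
import math


def provision_with_headroom(base_loads: dict[str, int], factor: float = 2.0) -> dict[str, int]:
    """Provision each table or index at `factor` times its base write load."""
    return {name: math.ceil(load * factor) for name, load in base_loads.items()}


def throttled(provisioned: dict[str, int], peak_loads: dict[str, int]) -> list[str]:
    """Resources whose peak write load exceeds their provisioned write capacity."""
    return [name for name, peak in peak_loads.items() if peak > provisioned[name]]


# Hypothetical base and spike write loads (WCUs) for a table and two GSIs.
base = {"table": 100, "gsi-by-user": 100, "gsi-by-status": 20}
spike = {"table": 180, "gsi-by-user": 180, "gsi-by-status": 60}

hot = throttled(provision_with_headroom(base), spike)
print(hot)  # ['gsi-by-status']
# A throttled GSI throttles writes to the base table too, so any entry means trouble.
print("table writes throttled:", bool(hot))  # table writes throttled: True
```

Here the small index sees a 3x spike while the table sees under 2x, so doubling everything still leaves the table exposed through its GSI.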

When using sparse indexes, the management challenge increases, because different indexes can have wildly different capacity requirements and can easily be over-provisioned.
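Sparse indexes make capacity needs uneven because DynamoDB writes an item into a GSI only when the item has that index’s key attribute. Below is a rough sketch of estimating each index’s share of writes from a sample of items. The index names, attributes, and sample are hypothetical; it assumes each index is keyed on a single attribute and counts one index write per matching item (updates that change an index key can cost more).

```python
def index_write_counts(items: list[dict], index_key_attrs: dict[str, str]) -> dict[str, int]:
    """Count how many sampled item writes would also be written to each GSI."""
    counts = {index: 0 for index in index_key_attrs}
    for item in items:
        for index, key_attr in index_key_attrs.items():
            # Items missing the index key attribute are not written to that (sparse) index.
            if key_attr in item:
                counts[index] += 1
    return counts


sample = [
    {"pk": "order#1", "customer": "c1"},
    {"pk": "order#2", "customer": "c2", "flagged_at": "2019-04-01"},
    {"pk": "order#3", "customer": "c1"},
    {"pk": "order#4", "customer": "c3"},
]
indexes = {"by-customer": "customer", "flagged-orders": "flagged_at"}
print(index_write_counts(sample, indexes))  # {'by-customer': 4, 'flagged-orders': 1}
```

Provisioning the sparse `flagged-orders` index at the same level as `by-customer` would leave most of its capacity idle in this sample.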

When weighing the different pricing models, there are a few rules of thumb that can be used to shortcut the cost comparison process.

Avoiding the complexity of capacity planning is one of the most compelling aspects of DynamoDB On-Demand. Hopefully with this pricing analysis in hand, you can now weigh intangible and personnel costs against the infrastructure cost and make the decision for your environment.
