
Let’s say your Python app uses DynamoDB and you need unit tests to verify your code, but you aren’t sure how to go about it. Perhaps you’re already using Moto in this project, or you’ve heard of the botocore Stubber, but you have a hunch that there might be a better way to write your DynamoDB-related unit tests. You’d be correct: there is a tool called “DynamoDB Local” that will make your life easier.

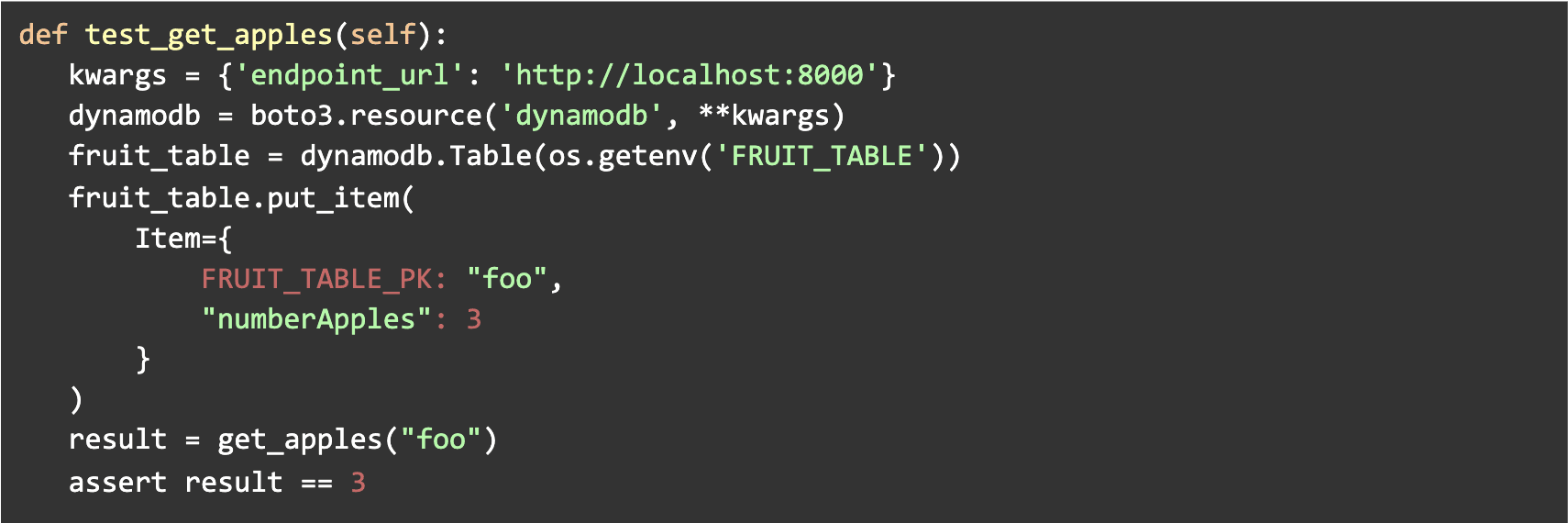

DynamoDB Local works by emulating a DynamoDB endpoint on your machine, so any API call can be directed to a local endpoint rather than to actual DynamoDB tables on AWS. Let’s dive right into what your unit tests will look like for testing the function get_apples.¹

Notice that we did not have to mock the get_item call that is presumably made by the “get_apples” function. Because DynamoDB Local is running for this unit test (note that some script must start it before your unit tests run), we can skip mocking the DynamoDB calls entirely.

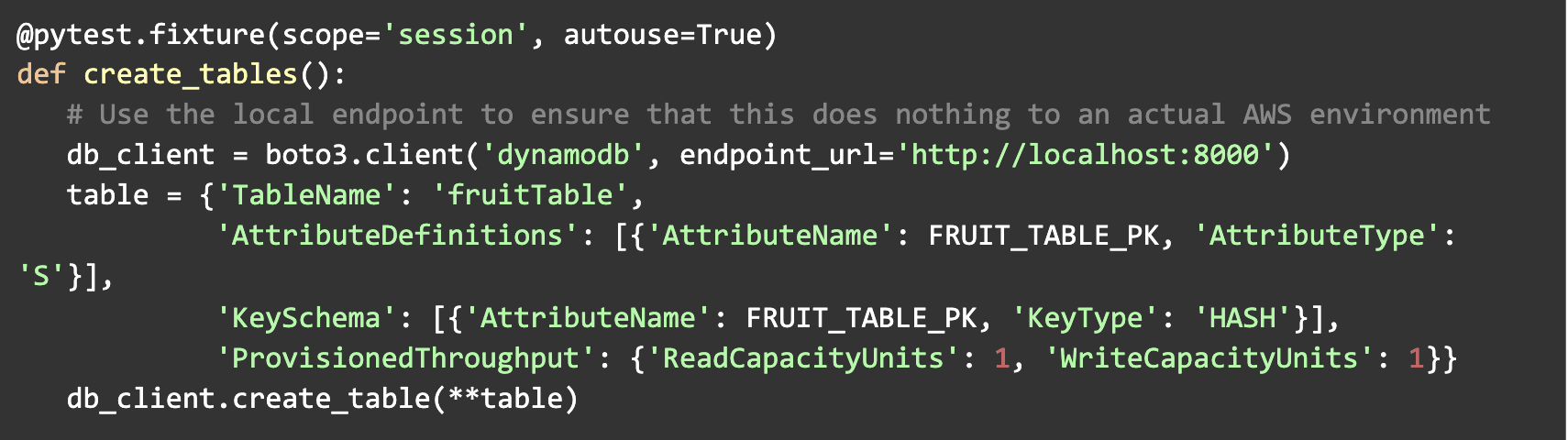

“But how do we set up the project to allow for such easy testing?” you may ask. The answer is simple: we create the needed tables before running the unit tests and all of our application logic will dynamically point to the correct DynamoDB endpoint.

Create the tables using a conftest.py file (assuming you’re using pytest):

¹ Function definition intentionally omitted.

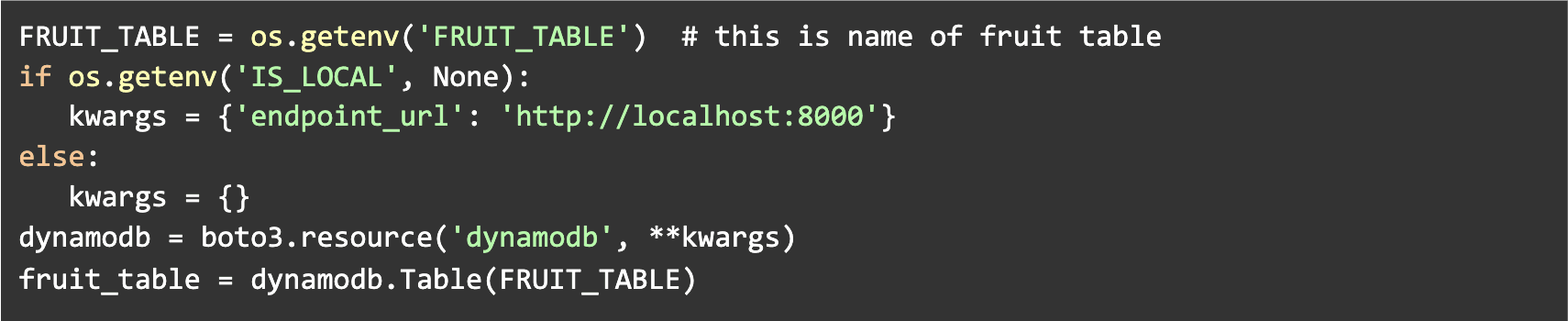
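One way such a conftest.py could look is sketched below. It uses the `pytest_sessionstart` hook rather than a fixture, and the table definition, the `DYNAMODB_ENDPOINT` variable name, and the dummy credentials are assumptions for illustration, not part of any official setup.

```python
# conftest.py (sketch)
import os

# Hypothetical table definition; adjust the name and key schema to your app.
APPLES_TABLE = {
    "TableName": "apples",
    "KeySchema": [{"AttributeName": "owner", "KeyType": "HASH"}],
    "AttributeDefinitions": [{"AttributeName": "owner", "AttributeType": "S"}],
    # Required by CreateTable; DynamoDB Local does not enforce throughput.
    "ProvisionedThroughput": {"ReadCapacityUnits": 1, "WriteCapacityUnits": 1},
}


def create_tables(dynamodb, definitions=(APPLES_TABLE,)):
    """Create each table that does not exist yet on the given resource."""
    existing = {table.name for table in dynamodb.tables.all()}
    for definition in definitions:
        if definition["TableName"] not in existing:
            dynamodb.create_table(**definition).wait_until_exists()


def pytest_sessionstart(session):
    """pytest hook that runs once, before test collection starts."""
    # Assumed variable name; application code can read the same variable.
    os.environ.setdefault("DYNAMODB_ENDPOINT", "http://localhost:8000")
    # boto3 needs some credentials and a region to sign requests, even
    # though DynamoDB Local does not validate them.
    os.environ.setdefault("AWS_ACCESS_KEY_ID", "dummy")
    os.environ.setdefault("AWS_SECRET_ACCESS_KEY", "dummy")
    os.environ.setdefault("AWS_DEFAULT_REGION", "us-east-1")

    import boto3  # imported lazily so this file loads even without boto3

    dynamodb = boto3.resource(
        "dynamodb", endpoint_url=os.environ["DYNAMODB_ENDPOINT"]
    )
    create_tables(dynamodb)
```

Setting the environment variables with `setdefault` means the test setup and the application code share the same endpoint, credentials, and region within the test process, while anything already exported by your shell or CI still wins.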

Point to the correct DynamoDB in your business logic using something like this:

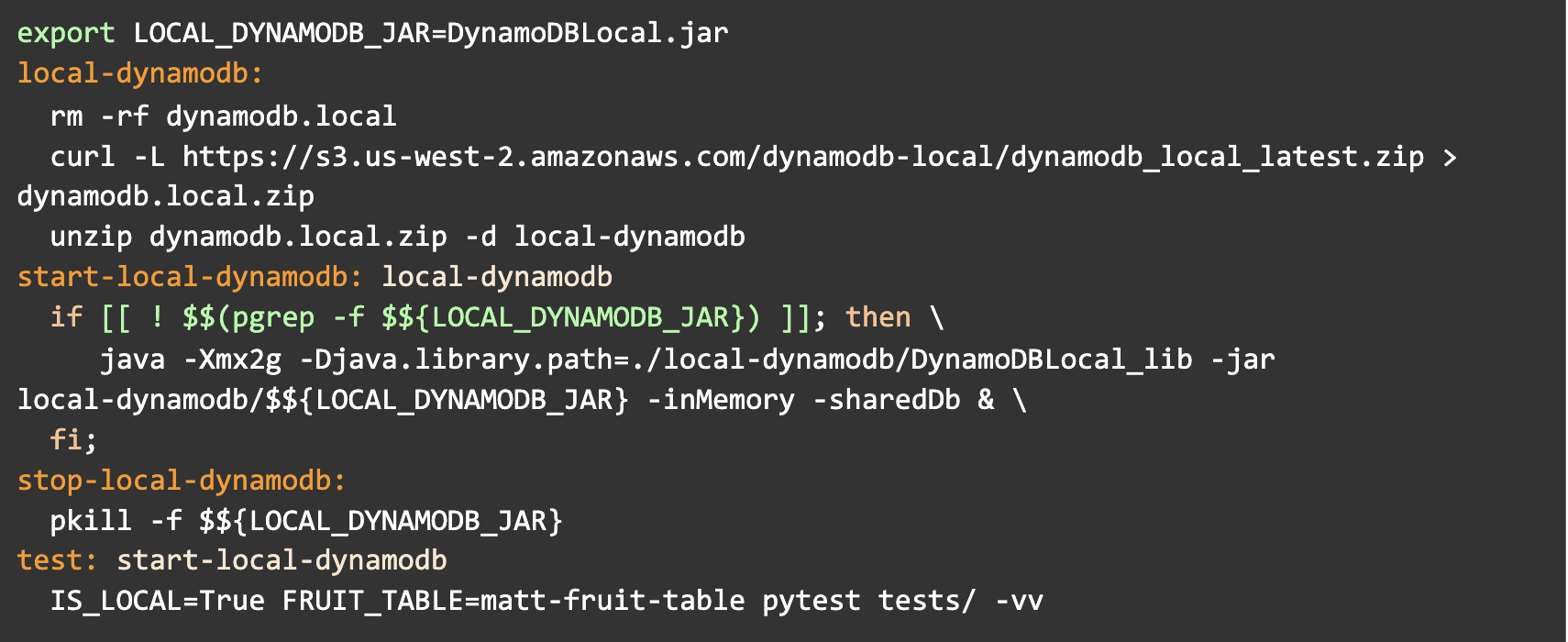
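A minimal sketch of this kind of endpoint switch follows. The environment variable name `DYNAMODB_ENDPOINT` and the helper names are assumptions; the only boto3 feature relied on is the `endpoint_url` argument of `boto3.resource`.

```python
import os


def dynamodb_kwargs(environ=os.environ):
    """Build keyword arguments for boto3.resource("dynamodb", ...)."""
    kwargs = {}
    endpoint = environ.get("DYNAMODB_ENDPOINT")  # assumed variable name
    if endpoint:
        # Set only in tests, e.g. "http://localhost:8000" for DynamoDB Local.
        kwargs["endpoint_url"] = endpoint
    return kwargs


def get_table(name):
    """Return a Table bound to AWS, or to DynamoDB Local when configured."""
    import boto3  # lazy import keeps this module importable without boto3

    return boto3.resource("dynamodb", **dynamodb_kwargs()).Table(name)
```

When the variable is unset, boto3 resolves the regular AWS endpoint, so production code paths are unchanged; only the test environment needs to export it.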

As mentioned before, you’ll probably want some script that starts DynamoDB Local before your unit tests run. A Makefile target is a good fit for this.
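If you would rather keep the startup step in Python (for example, to call it from a Makefile target), a helper along the following lines is one option. This is a sketch that assumes Docker is installed and uses the `amazon/dynamodb-local` image; the function names are hypothetical, and the port check is only a rough readiness signal.

```python
import socket
import subprocess
import time


def docker_command(port=8000, image="amazon/dynamodb-local"):
    """Build the `docker run` command that starts DynamoDB Local detached."""
    return ["docker", "run", "--rm", "-d", "-p", f"{port}:8000", image]


def wait_for_port(port, host="localhost", timeout=10.0):
    """Poll until something accepts TCP connections on host:port, or time out."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            with socket.create_connection((host, port), timeout=0.5):
                return True
        except OSError:
            time.sleep(0.2)
    return False


def start_dynamodb_local(port=8000):
    """Start the container and block until the port accepts connections."""
    subprocess.run(docker_command(port), check=True)
    if not wait_for_port(port):
        raise RuntimeError("DynamoDB Local did not start in time")
```

Calling `start_dynamodb_local()` before invoking pytest gives the tests a running endpoint on `http://localhost:8000`.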

A big takeaway here is that, rather than painfully mocking out all of your DynamoDB calls, you can just interface with the local DynamoDB in your unit tests as though it is the same as the “actual” DynamoDB running in AWS. You may simply put whatever information you need into the database prior to testing your logic and it will be there waiting to be fetched by your application code. This feels much nicer and easier than alternative methods.

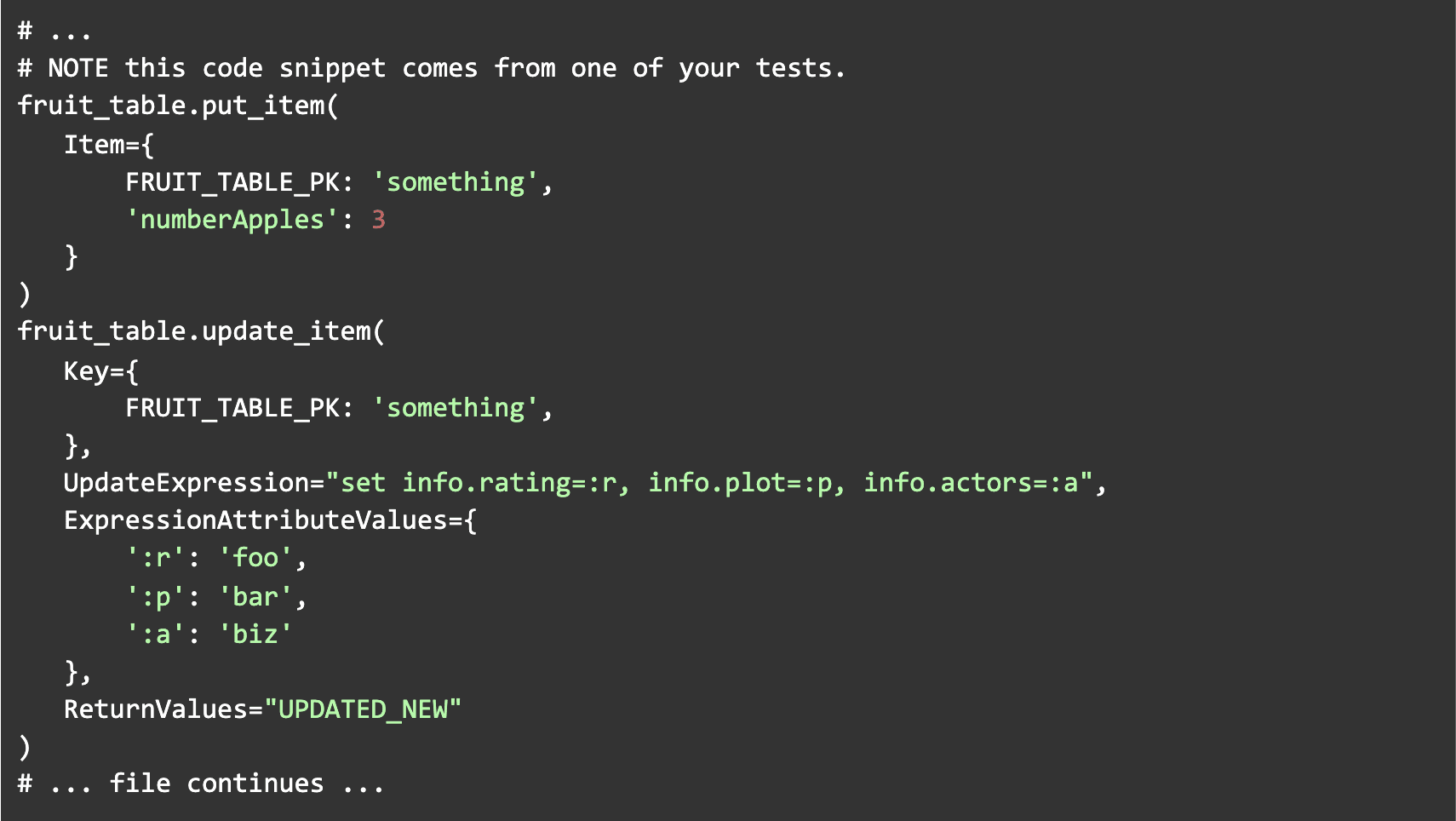

In addition to being easier than mocks or the botocore Stubber, DynamoDB Local also surpasses those approaches at checking the validity of your primary key attributes and of any put/update calls. Namely, if a DynamoDB call uses an incorrect primary key while you are running against DynamoDB Local, you will get an error message such as “An error occurred (ValidationException) when calling the GetItem operation: One of the required keys was not given a value.” In contrast, when using mocked objects or the botocore Stubber, you will not be made aware that an incorrect primary key was used. Similarly, put/update calls may involve highly error-prone expressions that the botocore Stubber will not check (see example below).
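A hypothetical update of the kind described could look like the sketch below. The key name `owner` and the helper names are assumptions; the update expression writes to a nested path under an `info` map, which is the situation that goes wrong when the item has no such attribute.

```python
def rating_update_kwargs(owner, rating):
    """Build update_item arguments that set a nested attribute."""
    return {
        "Key": {"owner": owner},
        # DynamoDB rejects this path unless the item already has a
        # top-level map attribute named "info".
        "UpdateExpression": "SET info.rating = :r",
        "ExpressionAttributeValues": {":r": rating},
    }


def set_rating(table, owner, rating):
    """Run the update against a boto3 Table (or anything with update_item)."""
    return table.update_item(**rating_update_kwargs(owner, rating))
```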

Because there is no “info” object on the record we are updating, the code shown above raises an error such as “The document path provided in the update expression is invalid for update” when the tests run against DynamoDB Local. The botocore Stubber does not execute the update expression to check its validity, so you would not be notified of this kind of mistake and your tests would be less useful.

We hope this has been useful and makes your validation efforts easier. If you need a hand with your Python and DynamoDB development, the Trek10 team will be happy to help!
