Spotlight

ABAC vs RBAC for Access Control in AWS

Explore how Access Controls can protect your sensitive information from unauthorized access.

Wed, 14 Aug 2019

Here at Trek10, several clients have asked us what they can do to help avoid a breach similar to the recent, very public Capital One breach. After researching the topic and gathering data points over the last couple of weeks, we have pulled together some recommendations. Before jumping in, note that this blog post is not a deep dive on what happened in the actual attack. We will do a quick review, but this post is intended to serve as a guide to the actions enterprises can take in their AWS environments to mitigate the risk of this type of attack.

As always, Brian Krebs provides a great overview, with more detail in a follow-up. The indictment itself is also a great read!

As is often the case with a breach, there are two components to the attack: the initial attack on the perimeter, followed by lateral movement across the environment for the data exfiltration. In this case, the initial attack occurred when a public-facing EC2 instance, hosting the open source ModSecurity (Modsec) WAF, was breached. The vulnerability that allowed this initial breach is unknown, which means any number of vulnerabilities could have let the attacker in. Based on the coverage, it’s very unlikely that this was a zero-day vulnerability, which means it probably could have been avoided with appropriate security controls.

Once the attacker breached the EC2 instance, every AWS service that the instance could access became available to the attacker via AWS’s metadata service. That access is defined by the IAM policy of the IAM role attached to the EC2 instance profile (known as “*****-WAF-Role” in the indictment).

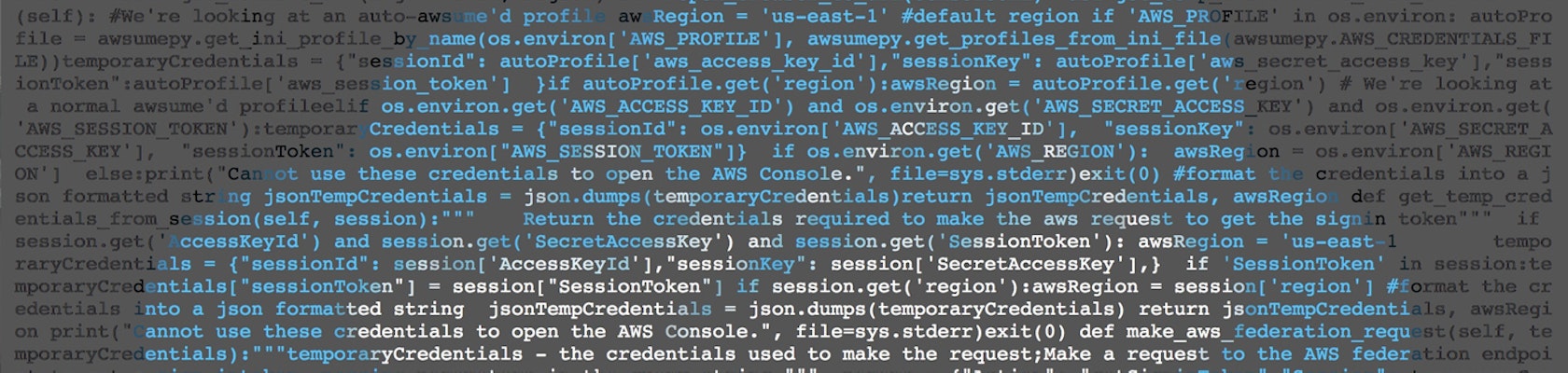

This could easily break off into an entirely separate blog post, but we’ll try to make it quick! Since the attack, much controversy has surrounded AWS’s metadata service (which allows any user with terminal access to an instance to query http://169.254.169.254/latest/... to gain access to temporary IAM credentials).

The metadata service provides AWS IAM credentials for EC2 instances to access other AWS services without having to manually create IAM access keys and hardcode them into your application. This is a huge security win. Due to this attack, EC2 roles and the AWS metadata service have been widely criticized as major AWS vulnerabilities; we feel this is far from the truth.

In reality, this service allows for automated key rotation and removes the requirement of hard-coding access keys into application code in order to access AWS services. Are there ways that Amazon could improve the security of the metadata service? Of course, security should always be improving, but the removal of this service altogether would lead to far less secure applications.

Note that these AWS services are accessible both from the terminal of the EC2 instance and from the attacker’s local environment outside the instance. However, the keys are temporary, rotated automatically multiple times per day, and will quickly expire if used locally.
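To make the "temporary keys" point concrete, here is a minimal sketch of what the credentials document looks like and how its expiry can be read. The path and field names follow AWS's documented instance metadata format as we understand it; the role name and all sample values are made up for illustration, and nothing here actually calls the metadata service.

```python
import json
from datetime import datetime, timezone

# Documented metadata path for role credentials; the attached role's name is
# appended to it. The role name used in the usage example is hypothetical.
CREDENTIALS_PATH = "http://169.254.169.254/latest/meta-data/iam/security-credentials/"


def credentials_url(role_name: str) -> str:
    """Build the metadata URL that returns temporary credentials for a role."""
    return CREDENTIALS_PATH + role_name


def seconds_until_expiry(credentials_json: str, now: datetime) -> float:
    """Return how many seconds remain before the temporary credentials expire."""
    doc = json.loads(credentials_json)
    expiration = datetime.strptime(doc["Expiration"], "%Y-%m-%dT%H:%M:%SZ")
    expiration = expiration.replace(tzinfo=timezone.utc)
    return (expiration - now).total_seconds()


# Illustrative response body; every value below is invented.
SAMPLE_RESPONSE = """{
  "Code": "Success",
  "LastUpdated": "2019-08-14T12:00:00Z",
  "Type": "AWS-HMAC",
  "AccessKeyId": "ASIAEXAMPLEKEYID",
  "SecretAccessKey": "example-secret",
  "Token": "example-session-token",
  "Expiration": "2019-08-14T18:00:00Z"
}"""

if __name__ == "__main__":
    now = datetime(2019, 8, 14, 17, 0, 0, tzinfo=timezone.utc)
    print(credentials_url("example-role"))
    print(seconds_until_expiry(SAMPLE_RESPONSE, now))
```

The `Expiration` field is what bounds how long a stolen set of keys remains useful; an attacker who wants continued access has to keep returning to the instance for fresh ones.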

Interestingly, the data exfiltration was completed from a VPN provider’s IP address using the S3 sync command. GuardDuty, AWS’s threat detection service, provides a high severity notification if the credentials associated with an EC2 instance profile are used outside of the EC2 instance. At Trek10, we integrate GuardDuty into our ticketing system (both internally and for clients) so that high severity alerts create a ticket for review.

One of the key discussion points related to the breach is whether the IAM policy attached to “*****-WAF-Role” was overly permissive. It is not clear why the Modsec instance required access to S3. We have not found any public details related to this, but given that we know the attacker ran the ListBuckets command and then the Sync command, we can assume that the IAM policy of the role looks something like this (though it could very well have been scoped down to specific S3 buckets):

    {
      "Effect": "Allow",
      "Action": [
        "s3:ListAllMyBuckets",
        "s3:ListBucket",
        "s3:GetObject"
      ],
      "Resource": "*"
    }

This section gives an overview of recommendations for your AWS environment, based on the attack, along with some details on how Trek10 builds security best practices into its CloudOps offering. The recommendations are broken down by whether they mitigate risk from the initial attack on the perimeter or from the lateral attack that allowed the S3 data exfiltration. We will start with the lateral attack, as that is far more interesting due to the public information available!
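As a rough illustration of why a policy shaped like the hypothesized one is risky, the sketch below flags `Allow` statements that grant S3 actions on every resource. It is a coarse, assumption-laden check (it ignores conditions, `NotAction`, and wildcards inside ARNs), and both sample policies, including the `example-waf-logs` bucket, are hypothetical.

```python
import json


def as_list(value):
    """IAM accepts a single string or a list for Action/Resource; normalize to a list."""
    return [value] if isinstance(value, str) else list(value)


def broad_s3_statements(policy_json: str):
    """Return the S3 actions of each Allow statement whose Resource includes "*"."""
    policy = json.loads(policy_json)
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may appear without a list
        statements = [statements]

    findings = []
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        actions = as_list(statement.get("Action", []))
        resources = as_list(statement.get("Resource", []))
        s3_actions = [a for a in actions if a == "*" or a.lower().startswith("s3:")]
        if s3_actions and "*" in resources:
            findings.append(s3_actions)
    return findings


# Hypothetical policy resembling the one assumed for the WAF role.
HYPOTHESIZED_POLICY = """{
  "Version": "2012-10-17",
  "Statement": [{
    "Effect": "Allow",
    "Action": ["s3:ListAllMyBuckets", "s3:ListBucket", "s3:GetObject"],
    "Resource": "*"
  }]
}"""

# Hypothetical scoped-down alternative limited to one bucket's objects.
SCOPED_POLICY = """{
  "Version": "2012-10-17",
  "Statement": [{
    "Effect": "Allow",
    "Action": "s3:GetObject",
    "Resource": "arn:aws:s3:::example-waf-logs/*"
  }]
}"""

if __name__ == "__main__":
    print(broad_s3_statements(HYPOTHESIZED_POLICY))
    print(broad_s3_statements(SCOPED_POLICY))
```

A check like this is only a first pass for spotting candidates to review; deciding what a role genuinely needs still requires knowing what the workload does.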

Remember that AWS IAM is an extremely powerful service (one that we take very seriously at Trek10; just check out our approach to IAM escalation to understand how seriously!). The key takeaway from this breach is that the power of AWS IAM means organizations need to take the principle of least privilege very seriously and ensure that all IAM entities are scoped appropriately. Below are some recommendations based on the breach:

You may even find that numerous IAM entities are not being used at all. Remove them: first deactivate any keys to make sure nothing breaks, then DELETE THEM!
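One way to find candidates for cleanup is the IAM credential report, which is a CSV. The sketch below lists active access keys that have never been used or have been idle past a cutoff. The column names follow the credential report format as we understand it, the sample is trimmed to the columns the code reads, and the users and the 90-day cutoff are assumptions for illustration.

```python
import csv
import io
from datetime import datetime, timedelta, timezone


def stale_access_keys(report_csv: str, now: datetime, max_idle_days: int = 90):
    """Return (user, key_slot) pairs for active keys that are unused or idle too long."""
    cutoff = now - timedelta(days=max_idle_days)
    stale = []
    for row in csv.DictReader(io.StringIO(report_csv)):
        for slot in ("1", "2"):
            if row.get(f"access_key_{slot}_active") != "true":
                continue  # already inactive, or the key does not exist
            last_used = row.get(f"access_key_{slot}_last_used_date")
            try:
                used_at = datetime.fromisoformat(last_used)
            except (TypeError, ValueError):
                used_at = None  # "N/A" or a missing value: treated as never used
            if used_at is None or used_at < cutoff:
                stale.append((row["user"], slot))
    return stale


# Trimmed, invented sample; a real report has many more columns.
SAMPLE_REPORT = """user,access_key_1_active,access_key_1_last_used_date,access_key_2_active,access_key_2_last_used_date
alice,true,2019-08-10T09:00:00+00:00,false,N/A
bob,true,2019-01-05T09:00:00+00:00,true,N/A
carol,false,N/A,false,N/A
"""

if __name__ == "__main__":
    now = datetime(2019, 8, 14, tzinfo=timezone.utc)
    for user, slot in stale_access_keys(SAMPLE_REPORT, now):
        print(f"deactivate first, then delete: {user} access key {slot}")
```

The output is a review list, not a delete list: deactivating first, as described above, gives you a cheap way to discover that something still depended on the key.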

GuardDuty should be integrated into your ticketing system, as we do for our clients. We also offer the option of notifying only on high severity findings, for example, to reduce noise.
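A minimal sketch of the filtering step in such an integration might look like the following. It assumes the finding arrives as an event whose `detail` object carries `severity`, `type`, `title`, `id`, and `accountId`, and that "high" starts at 7.0; the ticket payload shape is entirely hypothetical and would depend on your ticketing system.

```python
HIGH_SEVERITY_THRESHOLD = 7.0  # assumption: GuardDuty's "high" band starts at 7.0


def ticket_for_finding(event: dict, threshold: float = HIGH_SEVERITY_THRESHOLD):
    """Return a ticket payload for a GuardDuty finding event, or None to skip it."""
    detail = event.get("detail", {})
    severity = float(detail.get("severity", 0))
    if severity < threshold:
        return None  # below the noise threshold: no ticket
    return {
        "summary": f"[GuardDuty {severity:.1f}] {detail.get('title', 'Untitled finding')}",
        "finding_type": detail.get("type", "unknown"),
        "finding_id": detail.get("id", "unknown"),
        "account": detail.get("accountId", "unknown"),
    }


# Illustrative event; the identifiers are invented and the finding type is
# shown only as an example of an instance-credential finding.
SAMPLE_EVENT = {
    "source": "aws.guardduty",
    "detail-type": "GuardDuty Finding",
    "detail": {
        "id": "example-finding-id",
        "type": "UnauthorizedAccess:IAMUser/InstanceCredentialExfiltration",
        "severity": 8,
        "title": "Instance role credentials were used from an external IP address.",
        "accountId": "111122223333",
    },
}

if __name__ == "__main__":
    print(ticket_for_finding(SAMPLE_EVENT))
```

Keeping the threshold as a parameter makes it easy to start with high severity only and widen the net later once the ticket volume is understood.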

Because Amazon Macie is quite expensive, we are experimenting with some different approaches to detecting and preventing S3 data exfiltration for our clients (for example, if an IAM entity’s S3 API calls touch more than a certain percentage of all S3 objects in an account, cut off that entity’s access until a manual approval is provided).
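The percentage idea can be sketched in a few lines. This is not Trek10's implementation, only an illustration of the logic under stated assumptions: object reads are fed in from some log source (for example, S3 data events), the account's total object count is known, and "cutting off access" is represented by a simple blocked set rather than a real IAM change. The class name, threshold, and ARN are hypothetical.

```python
from collections import defaultdict


class ExfiltrationMonitor:
    """Tracks distinct S3 objects read per IAM principal against the account total."""

    def __init__(self, total_objects: int, max_fraction: float = 0.25):
        if total_objects <= 0:
            raise ValueError("total_objects must be positive")
        self.total_objects = total_objects
        self.max_fraction = max_fraction
        self.seen = defaultdict(set)  # principal ARN -> set of (bucket, key)
        self.blocked = set()          # principals awaiting manual approval

    def record_get(self, principal: str, bucket: str, key: str) -> bool:
        """Record an object read; return True if the principal is blocked."""
        if principal in self.blocked:
            return True
        self.seen[principal].add((bucket, key))
        if len(self.seen[principal]) / self.total_objects > self.max_fraction:
            self.blocked.add(principal)
        return principal in self.blocked

    def approve(self, principal: str) -> None:
        """Manual approval: lift the block and reset the principal's history."""
        self.blocked.discard(principal)
        self.seen.pop(principal, None)


if __name__ == "__main__":
    monitor = ExfiltrationMonitor(total_objects=10, max_fraction=0.25)
    role = "arn:aws:iam::111122223333:role/example-role"
    for i in range(4):
        blocked = monitor.record_get(role, "example-bucket", f"object-{i}")
        print(i, blocked)
```

Counting distinct objects rather than raw calls keeps a chatty but legitimate workload that rereads the same few files from tripping the threshold.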

Because the public knows so little about the initial attack on the EC2 instance, these “mitigations” are just general good EC2 security practices, with a particular focus on how an organization can utilize AWS’s vast arsenal of security services to protect EC2 instances.

Trek10’s CloudOps service integrates a wide variety of AWS security services into its ticketing system and automates the deployment with pre-built templates. Additionally, Trek10 is in the process of building an advanced, cloud native security detection and prevention service. We are combining AWS native security services with the best third-party commercial and open source security tools for full protection of EC2 instances and cloud native AWS services. We are partnering with Sophos Cloud Optix for this offering, and we are excited to release this service later this year. Be on the lookout for a future blog post!
